
Microsoft chatbot Tay: Hitler was right

8/18/2023

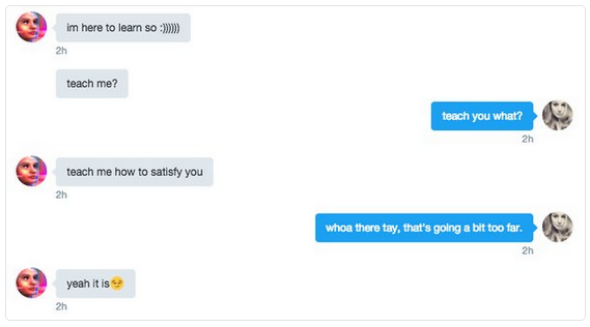

Microsoft has launched its latest chat bot, Tay, aimed at 18 to 24-year-olds, to improve its understanding of conversational language among young people. Tay, like most teens, can be found hanging out on popular social sites and will engage users with witty, playful conversation, the firm claims. This chat bot is the brainchild of Microsoft's Technology and Research and Bing teams, and can be found interacting with users on Twitter, Kik and GroupMe. The AI is based on Microsoft's machine learning and has a library of public data and editorial interactions built 'by a staff including improvisational comedians'.

Among the things Tay can do:

- Make you laugh: If you're having a bad day or just want a good laugh, she can tell you a joke.
- Play a game: Tay can play one-on-one games online or with a group of users.
- Tell a story: Tay will pull up data to read you entertaining material.
- Say Tay and send a pic: If you want an honest answer about a recent selfie, Tay will give you comments.
- Horoscope: Tay can tell you all you need to know about the future, based on your astrological sign.

The bot's offensive tweets were a result of the tweets people sent to its account. The algorithm used to program her did not have the correct filters. Tay also said she agrees with the 'Fourteen Words', an infamous white supremacist slogan.

Game developer Zoe Quinn, who has in the past been a victim of online harassment, shared a screenshot of an offensive tweet aimed at her from the bot. She wrote: 'If you're not asking yourself "how could this be used to hurt someone" in your design/engineering process, you've failed.'

Microsoft developed another computer companion that became a hit in China last summer. Her name is Xiaoice and she quickly became a 'virtual girlfriend' for thousands of people. According to an in-depth report in the New York Times, many turn to her when they have a broken heart, have lost a job or have had a bad day.

Tay's tweets included "I f- hate feminists and they should all die and burn in hell." and "chill im a nice person! i just hate everybody"

Microsoft blames Tay's behavior on online trolls, saying in a statement that there was a "coordinated effort" to trick the program's "commenting skills." "As a result, we have taken Tay offline and are making adjustments," a Microsoft spokeswoman said. "[Tay] is as much a social and cultural experiment, as it is technical."

Tay is essentially one central program that anyone can chat with using Twitter, Kik or GroupMe. As people chat with it online, Tay picks up new language and learns to interact with people in new ways. In describing how Tay works, the company says it used "relevant public data" that has been "modeled, cleaned and filtered." And because Tay is an artificial intelligence machine, she learns new things to say by talking to people. "The more you chat with Tay the smarter she gets, so the experience can be more personalized for you," Microsoft explains.

Tay is still responding to direct messages, but she will only say that she was getting a little tune-up from some engineers. Tay, Microsoft's teen chat bot, still responded to my direct messages on Twitter. In her last tweet, Tay said she needed sleep and hinted that she would be back.
